Now that AI is on the map, does anyone besides me want local LLM support in Xojo? Any chance of it happening?

Then I can have some privacy and test out different models in the IDE.

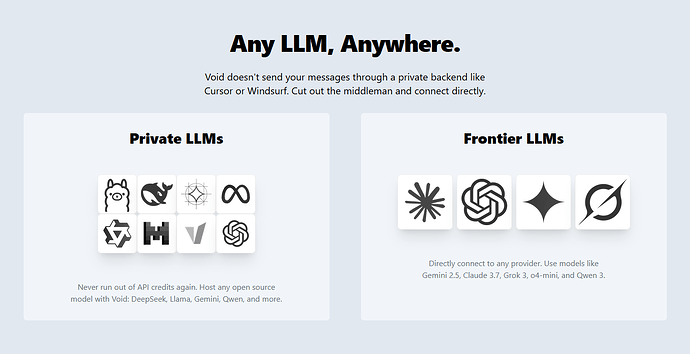

There’s an editor called “Void” that is configurable to use several AI providers, including your own. I hope Xojo follows this path.

You can use it in your projects already

I’m thinking Paul wanted the Xojo AI assistant to be local so it wouldn’t send anything on his machine to an external server.

That would be appreciated too.

As Daniel suggested, AIKit will communicate with local models running via Ollama on the local machine or a LAN.
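For anyone curious what talking to a local Ollama server looks like at the HTTP level, here is a minimal Python sketch. This is not how AIKit is implemented; it only illustrates Ollama's `/api/generate` endpoint on its default port, 11434. The model name `llama3` and the helper names `build_generate_request` and `ask` are assumptions for illustration, so substitute whatever model you have pulled.

```python
import json
import urllib.request

# Ollama listens on port 11434 by default; use a LAN address to reach another machine.
OLLAMA_DEFAULT_HOST = "http://localhost:11434"


def build_generate_request(prompt, model="llama3", host=OLLAMA_DEFAULT_HOST):
    """Build (but do not send) a POST request for Ollama's /api/generate endpoint.

    The model name is an assumption; use any model you have pulled locally.
    """
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        url=f"{host}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3", host=OLLAMA_DEFAULT_HOST, timeout=120):
    """Send the prompt to the Ollama server and return the generated text."""
    request = build_generate_request(prompt, model, host)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With stream=False, the generated text is in the "response" field.
    return body["response"]


if __name__ == "__main__":
    # Requires a running Ollama server with the chosen model available.
    print(ask("Say hello in one sentence."))
```

To reach a model on another machine on the LAN, pass its address as `host`, for example `ask("Hi", host="http://192.168.1.10:11434")` (a made-up address). Nothing leaves your network either way.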

No, Ian is right. I’m talking about the built-in assistant, not a toolkit or library.

I am going to explore Ollama too. I have been using it with my Python program.

Garry, I assume I can start off with AIKit. Can I use your kit with API 1?

Feel free to use AIKit. I develop in the latest Xojo version, and it’s API 2.0. It may not work in significantly older versions of Xojo. Give it a whirl.