Hello,

So, I have an MSSQL database server with around 4 GB of data. I extract around 400 MB of that data and store it in a SQLite DB.

Here is the first issue I get.

Using Windows 10 and ODBC.

When I run the query, I get the data, but when writing to the SQLite DB, the first record of every table is stored without its String columns. I have 23 tables, so I have to go through them one by one and manually copy/paste the data for the first row. I have no idea why this happens.

Moving to macOS, on a Mac mini M1, I start the processing.

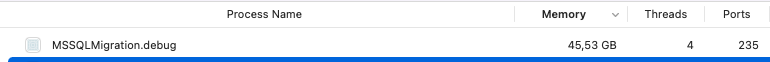

Doing a simple query and moving the data from multiple tables into one table, I end up, after around 190,000 records, with a memory usage of 44.37 GB for my app. I follow the standard approach: run the query, read the data from each row, and save it to the DB with DatabaseRow.

Is that normal? The speed is great, since all this processing took only about 3 minutes, but the memory usage is huge. All of this is done in a thread.

The third step is another processing pass where I call a shell to extract the RTF data from the DB and convert it to plain text using textutil. It works so far, but the shell is painfully slow and memory keeps increasing. I have reached 10,100 of 95,000 records and memory is already at 45.37 GB; apparently it grows by about 1 GB for every 10k records.

Is this a memory leak, or is something wrong on the DB side?

Latest macOS Monterey and Xojo 2021r2.1.

Thanks.